Introduction

Many people have told me that when they get started with data science, they get stuck on the sheer number of tools available to them.

Although there are a handful of guides out there that address this problem, such as “19 Data Science Tools for people who aren’t so good at Programming” or “A Complete Tutorial to Learn Data Science with Python from Scratch”, I would like to show which tools I generally prefer for my day-to-day data science needs.

Read on if you are interested!

Note: I usually work in Python, and in this article I intend to cover the tools used in the Python ecosystem on Windows.

Table of Contents

- What does a “data science stack” look like?

- Case Study of a Deep Learning problem: Getting started with Python Ecosystem

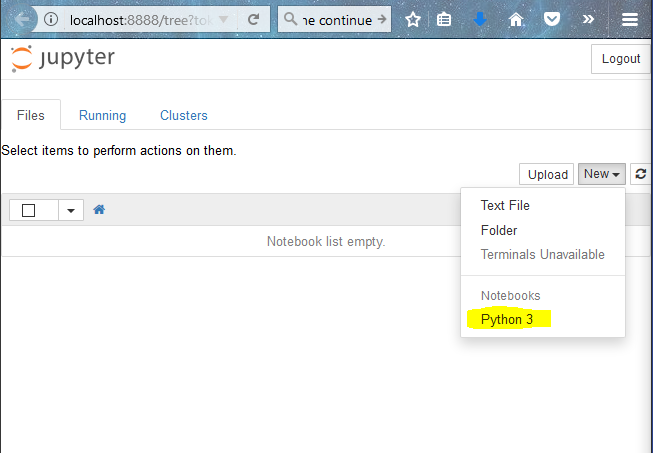

- Overview of Jupyter: A Tool for Rapid Prototyping

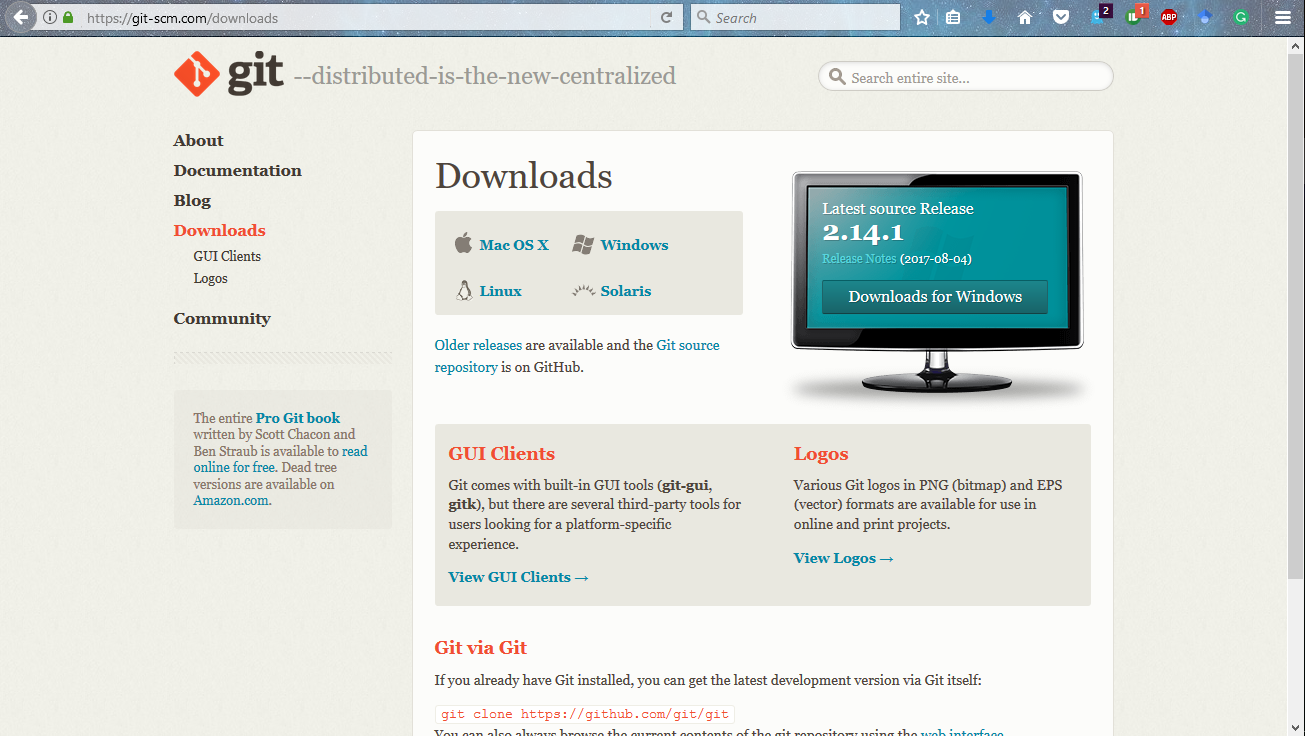

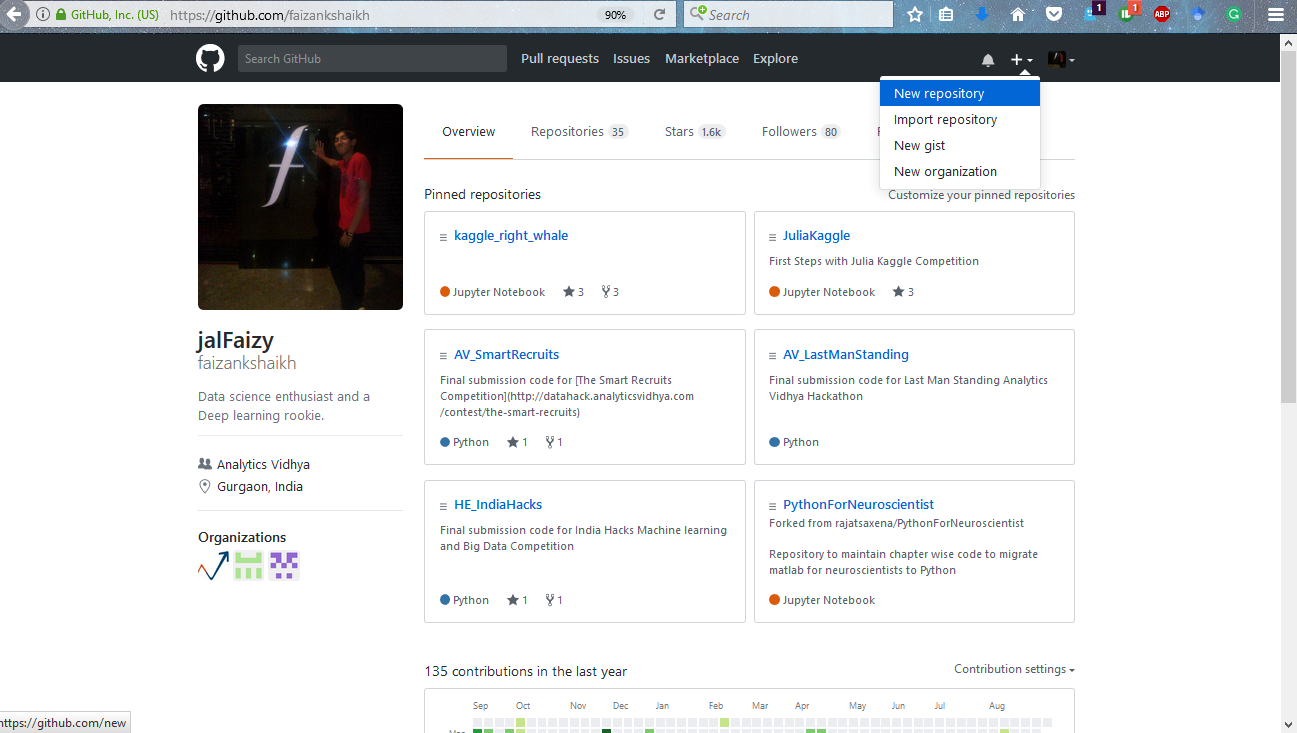

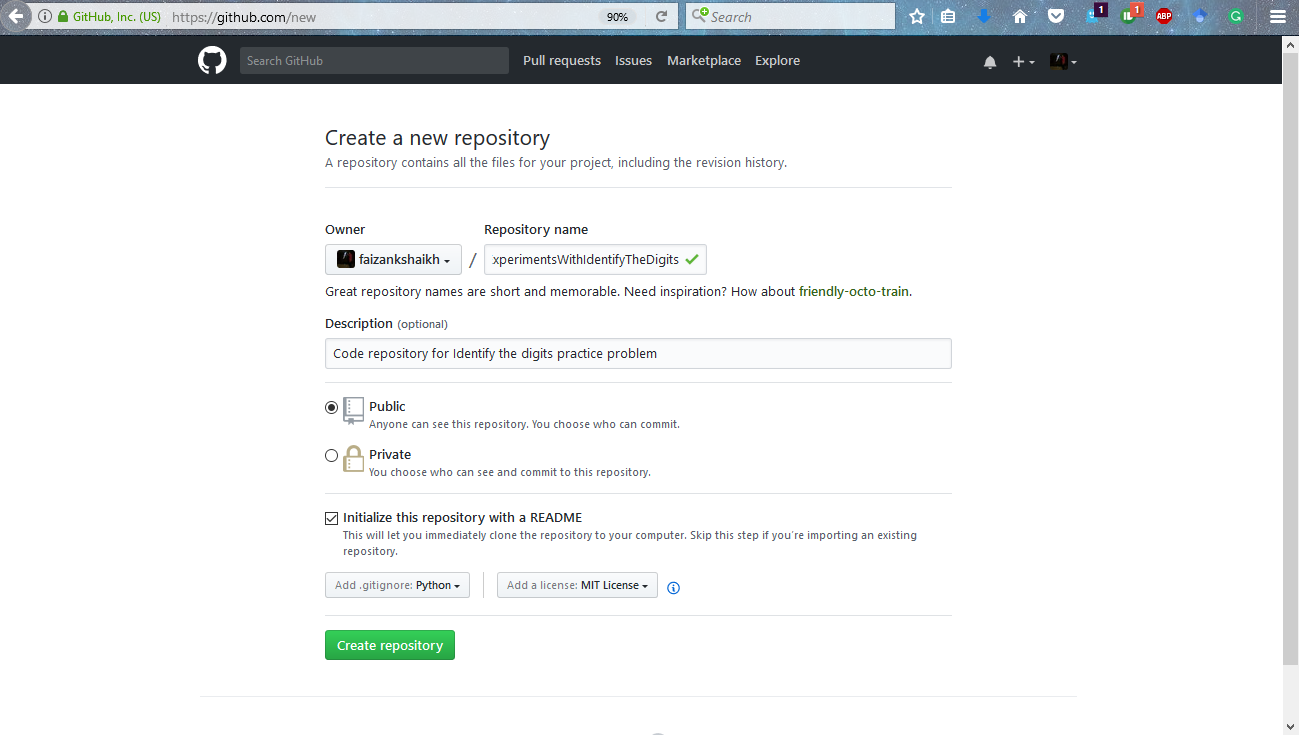

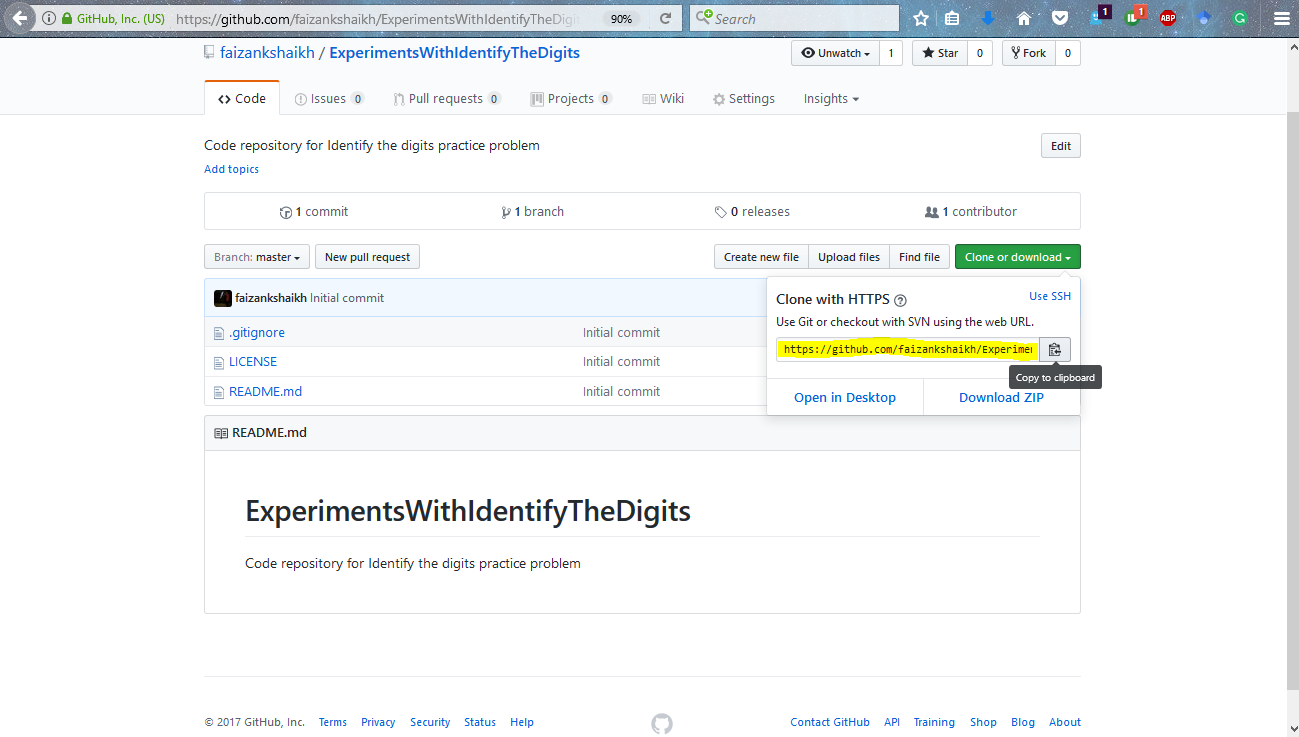

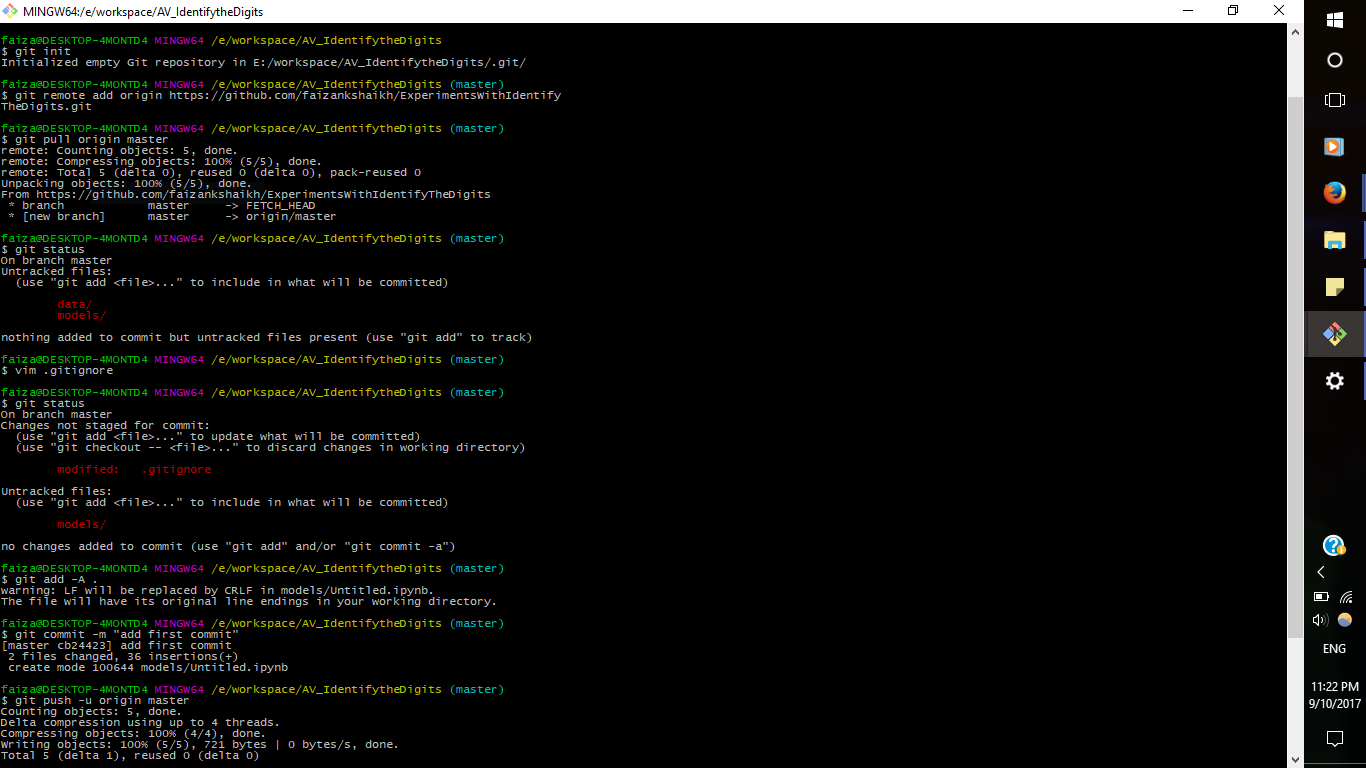

- Keeping Tab of Experiments: Version Control with GitHub

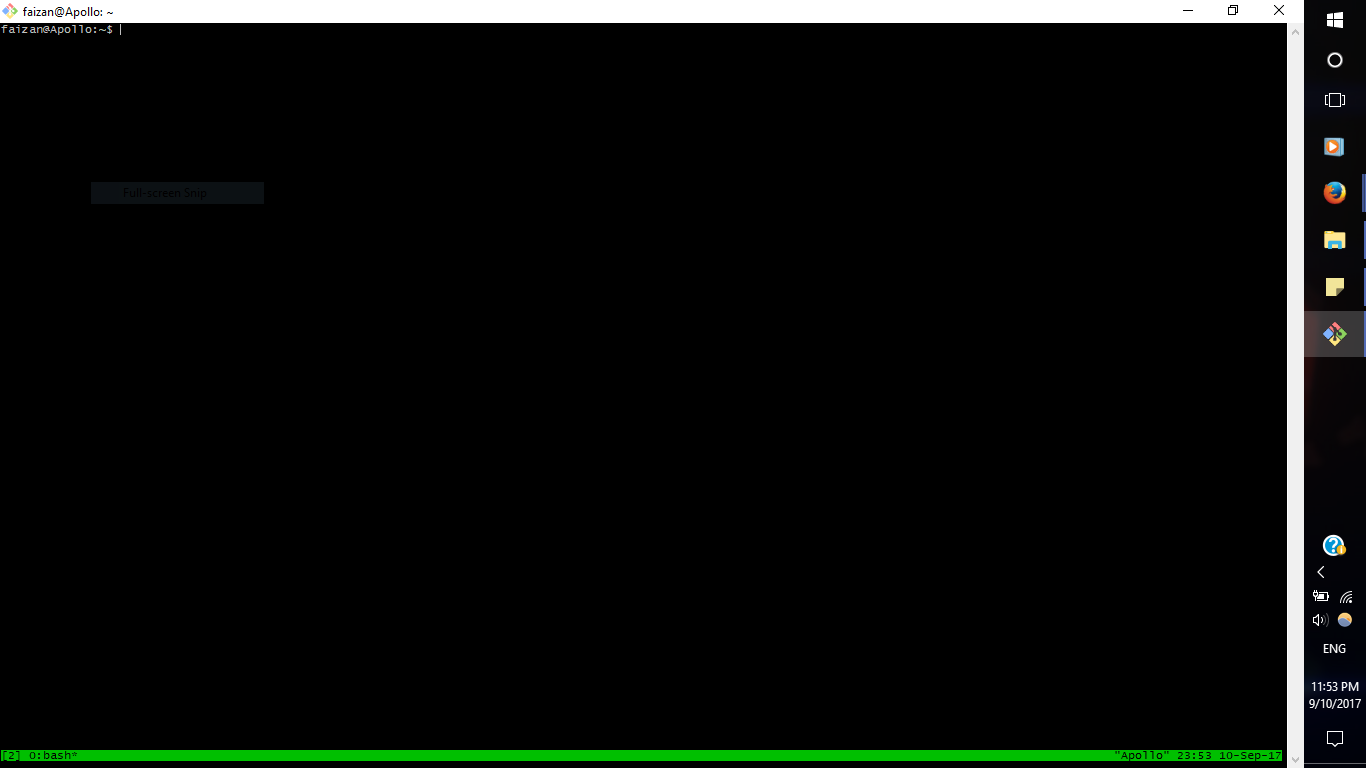

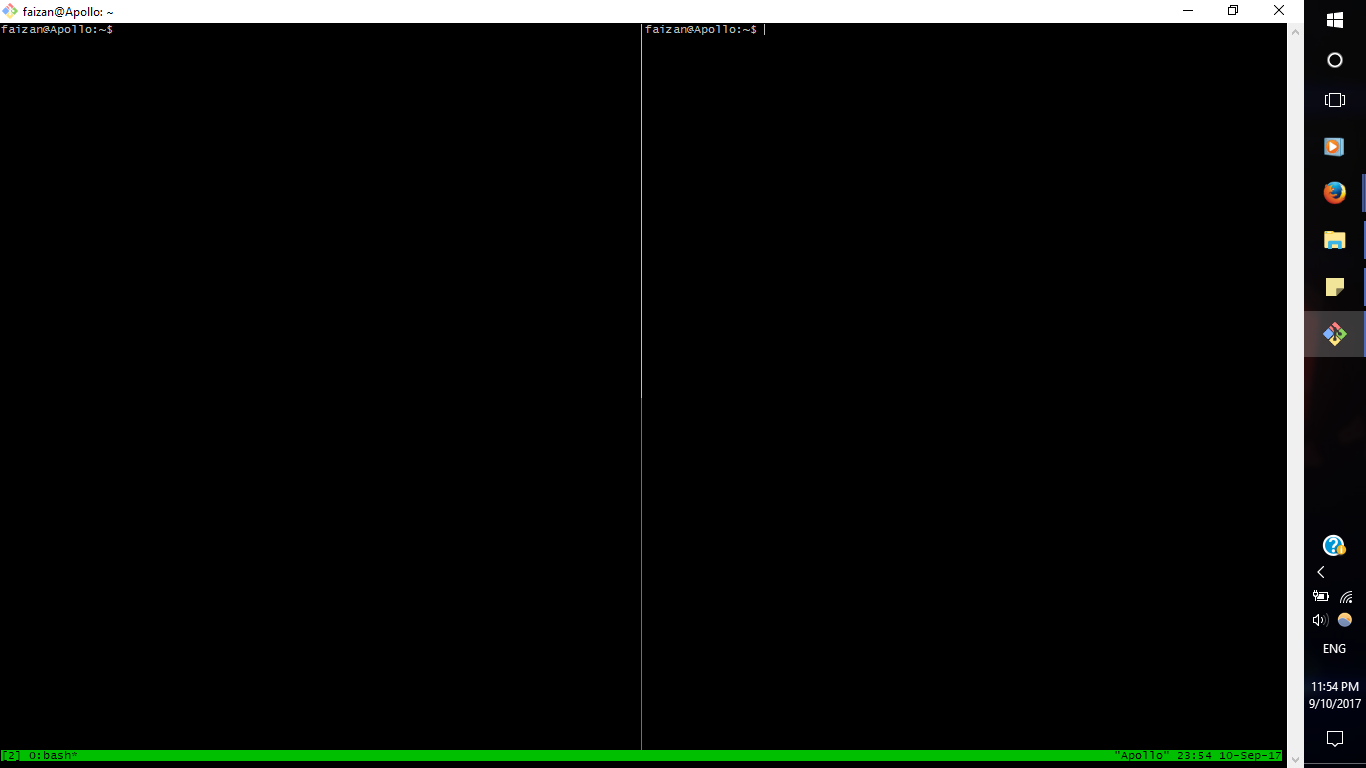

- Running Multiple Experiments at the Same Time: Overview Tmux

- Deploying the Solution: Using Docker to Minimize Dependencies

- Summarization of tools

What does a “data science stack” look like?

I come from a software engineering background. There, people are curious about what kind of “stack” others are currently working with. Their intention is to find out which tools are trending and what the pros and cons of using them are. That is because new tools come out every day that try to eliminate the nagging problems people face.

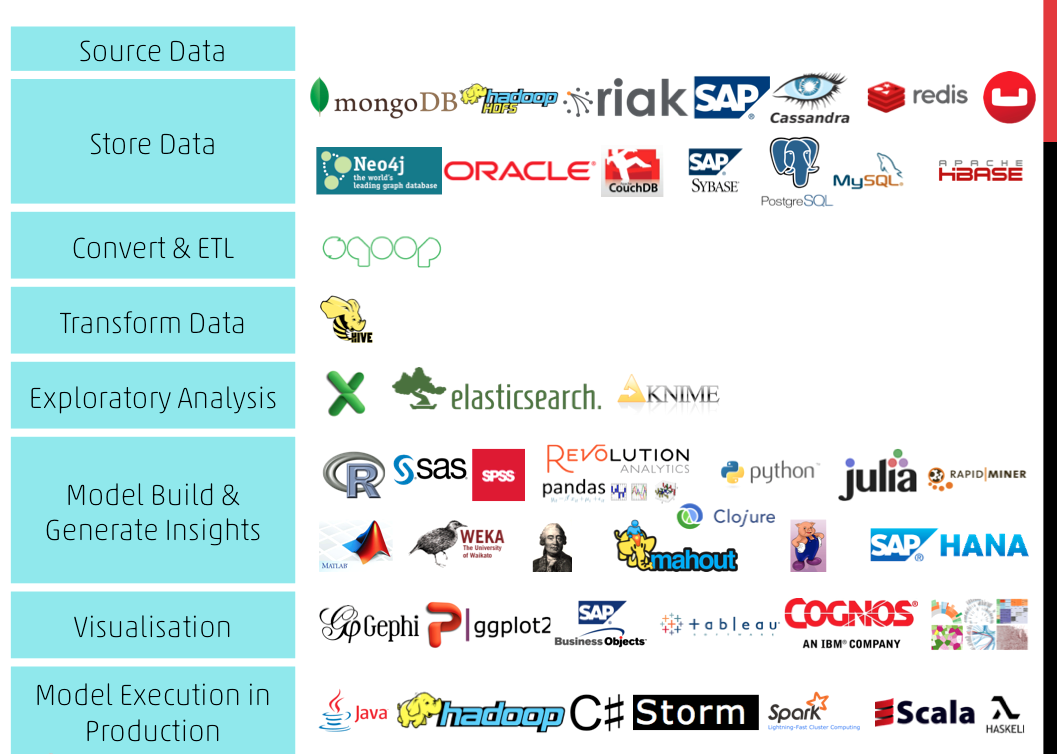

In data science, I would say there is more emphasis on the techniques you use to solve a problem than on the tools you use. Still, it is wise to get to know what kinds of tools are available to you. A survey was done with this in mind. The accompanying image summarizes its findings.

Case Study of a Deep Learning problem: Getting started with Python Ecosystem

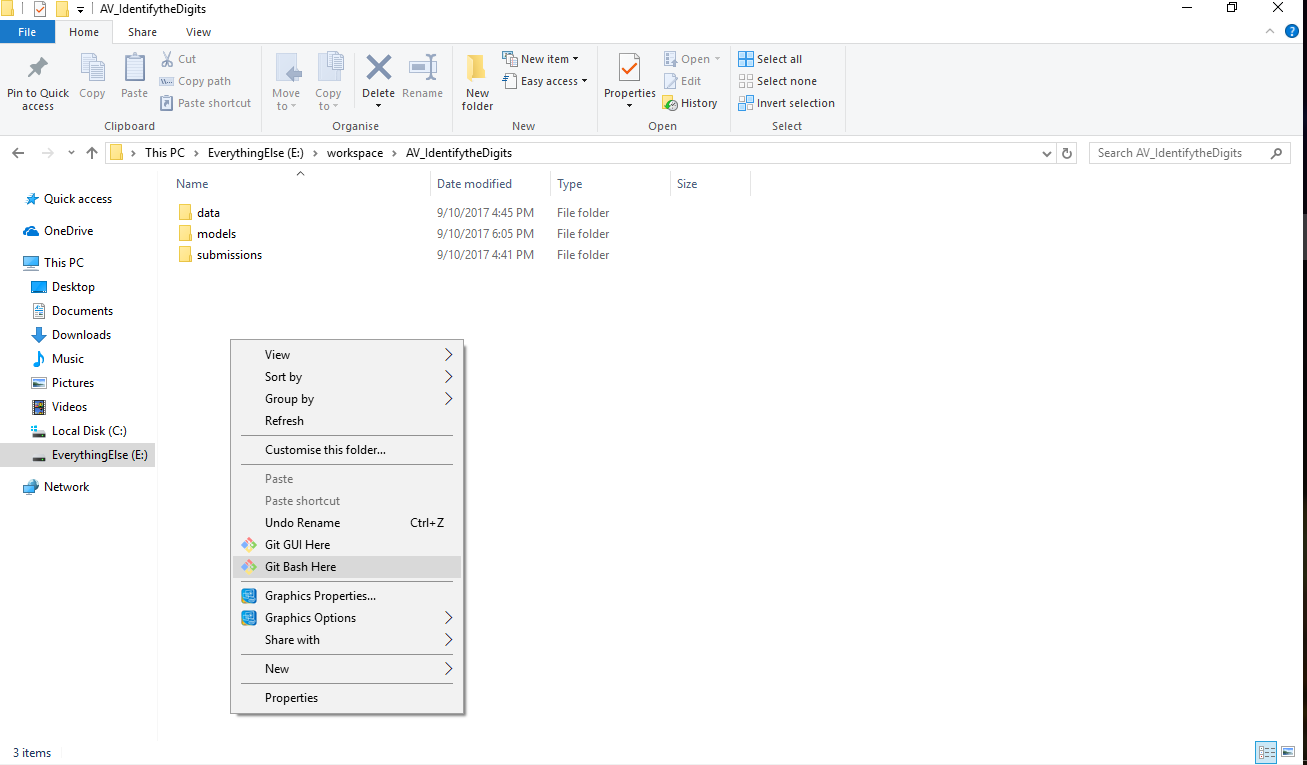

Instead of simply telling you which tools to use, I will give you a rundown of these tools with a practical example. We will do this exercise on the “Identify the Digits” practice problem.

Let us first get to know what the problem entails. The home page says: “Here, we need to identify the digit in given images. We have total 70,000 images, out of which 49,000 are part of train images with the label of digit and rest 21,000 images are unlabeled (known as test images). Now, we need to identify the digit for test images.”

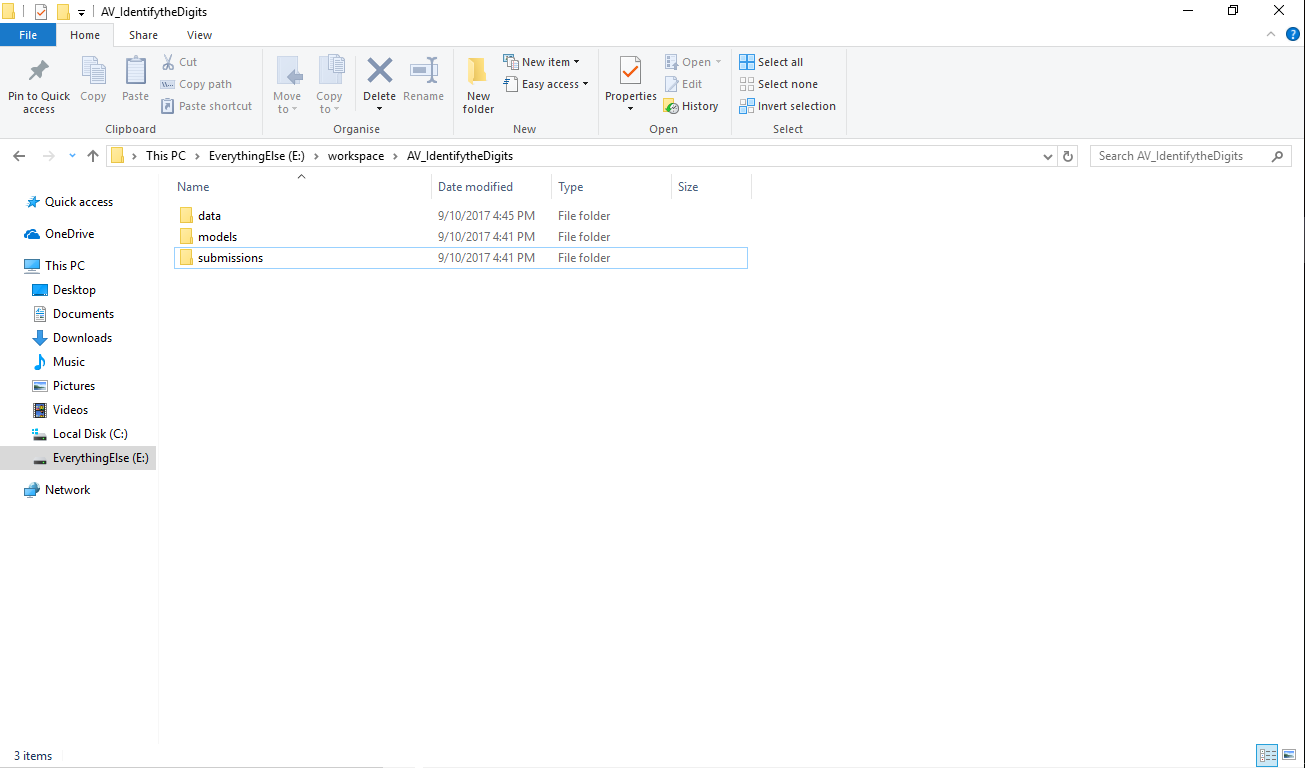

This essentially means that the problem is an image recognition problem. The first step here would be to set up your system for the problem. I usually create a specific folder structure for each problem I start (yes, I’m a Windows user) and start working from there.
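The folder setup described above can be scripted so that every new problem starts from the same layout. The following is only a minimal sketch: the article does not list the actual folders used, so the subfolder names and the `create_project` helper are hypothetical choices made for illustration.

```python
from pathlib import Path

# Hypothetical layout; the article does not say which folders the author uses.
SUBFOLDERS = ["data/raw", "data/processed", "notebooks", "models", "submissions"]


def create_project(root, subfolders=SUBFOLDERS):
    """Create the project root and its subfolders; return the folder paths."""
    root = Path(root)
    created = []
    for name in subfolders:
        folder = root / name
        # parents=True builds intermediate folders; exist_ok=True makes reruns safe.
        folder.mkdir(parents=True, exist_ok=True)
        created.append(folder)
    return created
```

Calling `create_project("identify_the_digits")` would create the folders under the current working directory. `pathlib` handles Windows path separators, so the same script should work unchanged on other platforms.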

For this kind of problem, I tend to use the kit listed below:

- Python 3 to get started, of course!

- NumPy / SciPy for basic data reading and processing

- Pandas to structure the data and bring it into shape for processing

- Matplotlib for data visualization

- Scikit-learn / TensorFlow / Keras for predictive modelling

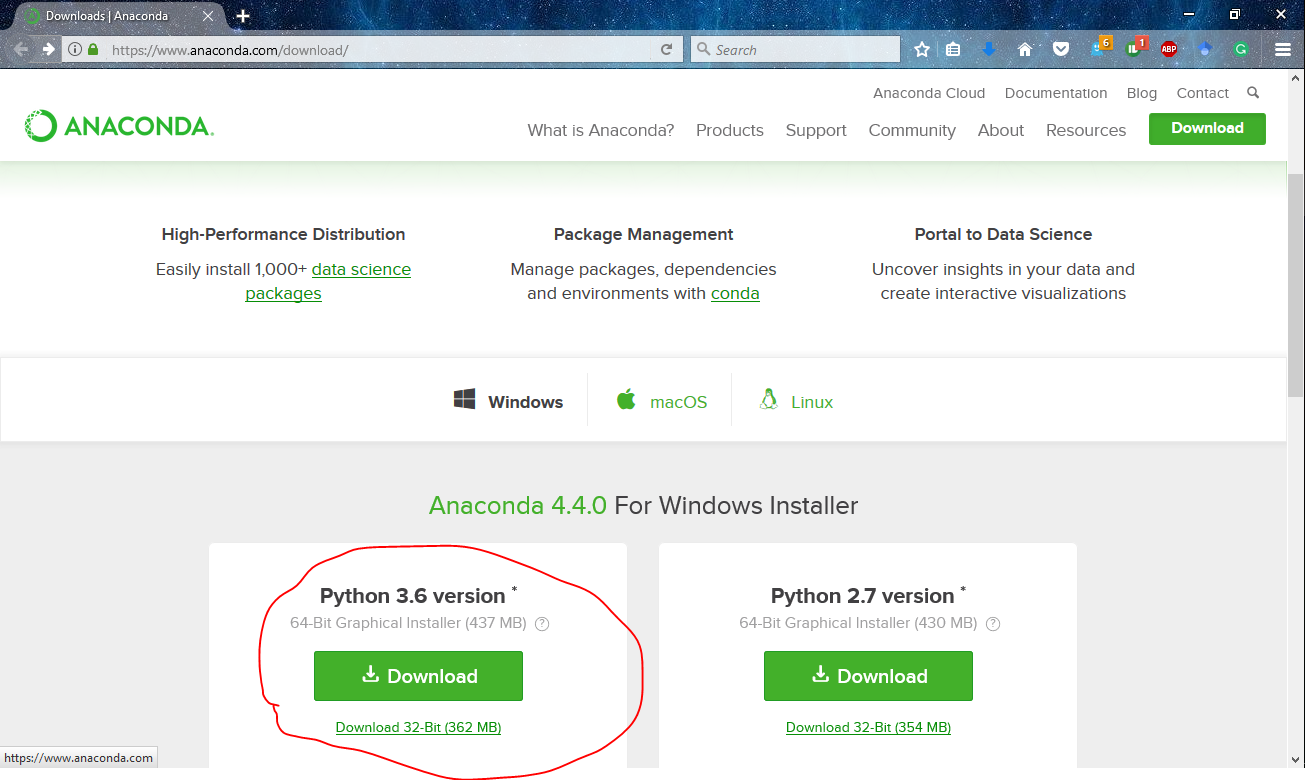

Fortunately, most of the above can be accessed through a single distribution called Anaconda. I’m accustomed to using Anaconda because of its comprehensive set of data science packages and its ease of use.

To set up Anaconda on your system, you simply have to download the appropriate version for your platform. More specifically, I have the Python 3.6 version of Anaconda 4.4.0.

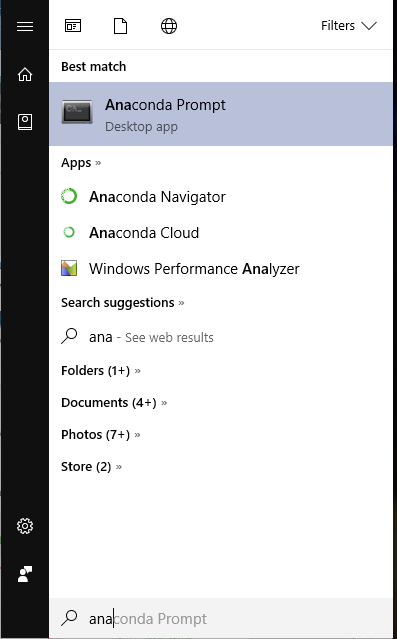

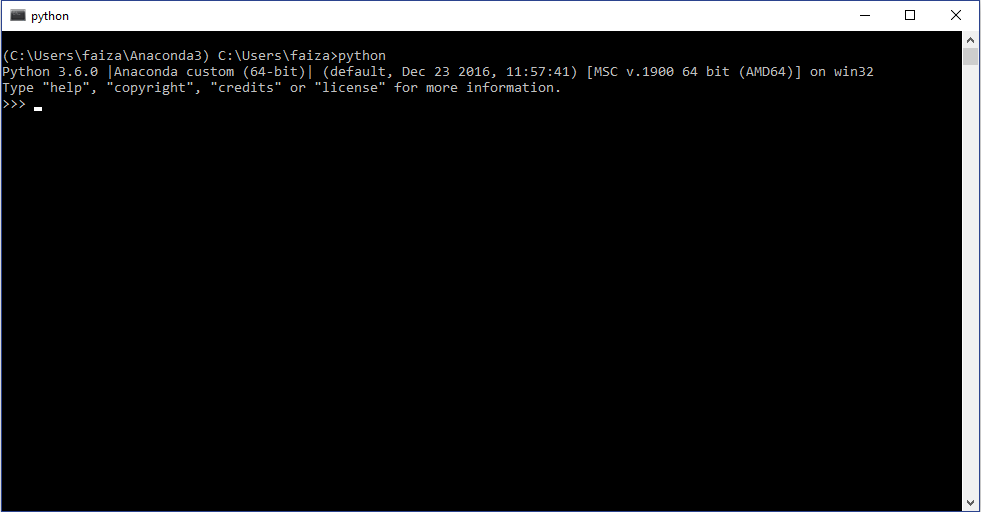

Now, to use the newly installed Python ecosystem, open the Anaconda command prompt and type “python”.
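Once the interpreter is open, it can be useful to confirm that it really is the Anaconda Python and not another installation on the machine. The snippet below is a small sketch of such a check using only the standard library; the `describe_interpreter` helper is a name made up for this example.

```python
import os
import platform
import sys


def describe_interpreter():
    """Collect basic facts about the running Python interpreter."""
    return {
        "python_version": platform.python_version(),
        "executable": sys.executable,
        "prefix": sys.prefix,
        # conda sets CONDA_DEFAULT_ENV when an environment is activated;
        # the value is None outside an activated conda environment.
        "conda_env": os.environ.get("CONDA_DEFAULT_ENV"),
    }


for key, value in describe_interpreter().items():
    print(f"{key}: {value}")
```

If `executable` points inside your Anaconda installation folder, the prompt is using the right Python.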

As I said earlier, most things come pre-installed in Anaconda. The only libraries left are TensorFlow and Keras. A smart thing to do here, for which Anaconda provides a feature, is to create an environment. You do this so that even if something goes wrong during setup, it won’t affect your original system. It is like creating a sandbox for all your experiments. To do this, go to the Anaconda command prompt and type

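conda create -n dl anaconda

Now activate the new environment (with this Anaconda version on Windows, type activate dl; newer conda releases use conda activate dl) and install the remaining packages by typing

pip install --ignore-installed --upgrade tensorflow keras

Now you can start writing your code in your favorite text editor and run the Python scripts!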
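Before writing any real code, a quick sanity check can confirm that the whole kit is importable from the active environment. This is a hedged sketch rather than an official Anaconda or pip tool: `KIT`, `installed_version`, and `check_kit` are names invented for this example, and the list simply mirrors the packages mentioned in this article, using their import names.

```python
import importlib
import importlib.util

# Import names of the kit from this article; scikit-learn is imported as "sklearn".
KIT = ["numpy", "scipy", "pandas", "matplotlib", "sklearn", "tensorflow", "keras"]


def installed_version(module_name):
    """Return the module's version string, or None if it cannot be imported."""
    if importlib.util.find_spec(module_name) is None:
        return None  # not installed in the active environment
    try:
        module = importlib.import_module(module_name)
    except Exception:
        return None  # present on disk, but the import itself fails
    # Most scientific packages expose __version__; fall back if one does not.
    return getattr(module, "__version__", "unknown")


def check_kit(names=KIT):
    """Map each module name to its version string, or None if missing."""
    return {name: installed_version(name) for name in names}


if __name__ == "__main__":
    for name, version in check_kit().items():
        print(f"{name:<12} {version if version else 'MISSING'}")
```

Any line reported as MISSING suggests that the package was installed into a different environment than the one currently active.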

Step 3: Get link to the repository
