Transduction and transductive learning are terms you may come across in applied machine learning. The term is used in some applications of recurrent neural networks to sequence prediction problems, such as those in natural language processing.

In this post, you will discover what transduction is in machine learning.

Let’s get started.

This tutorial is divided into 4 parts, covering the general definition of transduction, transductive learning, transduction in linguistics, and transduction in sequence prediction.

Let’s start with some basic dictionary definitions. To transduce means to convert something into another form (Merriam-Webster Dictionary, online, 2017).

It is a popular term in electronics and signal processing, where a “transducer” is a general name for components or modules that convert sound to energy or vice versa (Digital Signal Processing Demystified, 1997).

In biology, specifically genetics, transduction refers to the process of one microorganism transferring genetic material to another (Merriam-Webster Dictionary, online, 2017).

So, generally, we can see that transduction is about converting a signal into another form. The signal processing description is the most salient: sound waves are turned into electrical energy for some use within a system. Each sound is represented by some electrical signature, at some chosen level of sampling.

Transduction, or transductive learning, is used in statistical learning theory to refer to predicting specific test examples directly from specific training examples from a domain. It is contrasted with other types of learning, such as inductive learning and deductive learning (The Nature of Statistical Learning Theory, 1995, page 169).

It is an interesting framing of supervised learning in which the classical problem of “approximating a mapping function from data and using it to make a prediction” is seen as more difficult than is required. Instead, specific predictions are made directly from the real samples from the domain.
No function approximation is required (The Nature of Statistical Learning Theory, 1995, page 169).

A classical example of a transductive algorithm is the k-Nearest Neighbors algorithm, which does not model the training data but instead uses it directly each time a prediction is required (Learning by Transduction, 1998).

Classically, transduction has been used when talking about natural language, such as in the field of linguistics. For example, there is the notion of a “transduction grammar”, a set of rules for transforming examples of one language into another (Handbook of Natural Language Processing, 2000, page 460).

There is also the concept of a “finite-state transducer” (FST) from the theory of computation, which is invoked when talking about translation tasks that map one set of symbols to another. Importantly, each input produces one output (Statistical Machine Translation, 2010, page 294).

This use of transduction in theory and in classical machine translation colors the usage of the term in modern sequence prediction with recurrent neural networks on natural language processing tasks.

In his textbook on neural networks for language processing, Yoav Goldberg defines a transducer as a specific network model for NLP tasks. A transducer is narrowly defined as a model that outputs one time step for each input time step provided. This maps to the linguistic usage, specifically finite-state transducers (Neural Network Methods in Natural Language Processing, 2017, page 168).

He proposes this type of model for sequence tagging as well as language modeling. He goes on to indicate that conditioned generation, such as with the Encoder-Decoder architecture, may be considered a special case of the RNN transducer.

This last point is surprising given that the Decoder in the Encoder-Decoder architecture permits a varying number of outputs for a given input sequence, breaking the “one output per input” part of the definition.
More generally, transduction is used in NLP sequence prediction tasks, specifically translation. The definitions seem more relaxed than the strict one-output-per-input of Goldberg and the FST.

For example, Ed Grefenstette, et al. describe transduction as mapping an input string to an output string (Learning to Transduce with Unbounded Memory, 2015). They go on to provide a list of some specific NLP tasks that help to make this broad definition concrete.

Alex Graves also uses transduction as a synonym for transformation and usefully provides a list of example NLP tasks that meet the definition (Sequence Transduction with Recurrent Neural Networks, 2012).

To summarize, we can restate a list of transductive natural language processing tasks as follows: transliteration, spelling correction, inflectional morphology, machine translation, speech recognition, protein secondary structure prediction, and text-to-speech.

Finally, in addition to the notion of transduction referring to broad classes of NLP problems and RNN sequence prediction models, some new methods are explicitly being named as such. Navdeep Jaitly, et al. refer to their new RNN sequence-to-sequence prediction method as a “Neural Transducer”, a label that, technically, any RNN for sequence-to-sequence prediction would also fit.

What Is Transduction?

transduce: to convert (something, such as energy or a message) into another form. Example: “essentially sense organs transduce physical energy into a nervous signal”

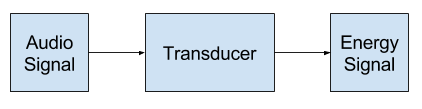

All signal processing begins with an input transducer. The input transducer takes the input signal and converts it to an electrical signal. In signal-processing applications, the transducer can take many forms. A common example of an input transducer is a microphone.

transduction: the action or process of transducing; especially : the transfer of genetic material from one microorganism to another by a viral agent (such as a bacteriophage)

Example of Signal Processing Transducer


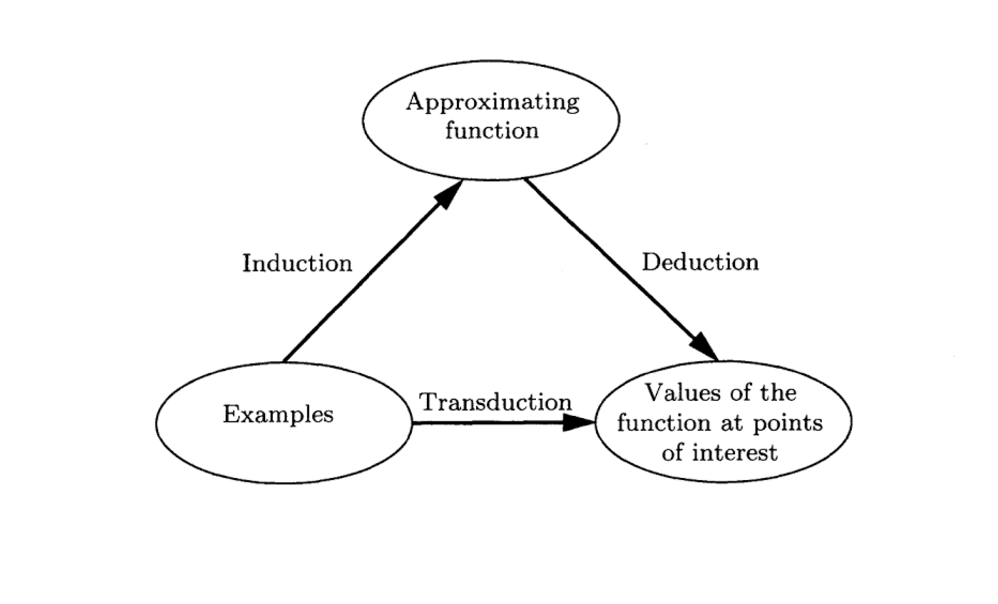

Transductive Learning

Induction, deriving the function from the given data. Deduction, deriving the values of the given function for points of interest. Transduction, deriving the values of the unknown function for points of interest from the given data.

Relationship between Induction, Deduction and Transduction


Taken from The Nature of Statistical Learning Theory.

The model of estimating the value of a function at a given point of interest describes a new concept of inference: moving from the particular to the particular. We call this type of inference transductive inference. Note that this concept of inference appears when one would like to get the best result from a restricted amount of information.

Transduction is naturally related to a set of algorithms known as instance-based, or case-based learning. Perhaps, the most well-known algorithm in this class is k-nearest neighbour algorithm.
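To make the instance-based idea concrete, here is a minimal sketch of a k-nearest neighbour classifier in plain Python. It is an illustration only: the function name `knn_predict`, the toy data, and the choice of Euclidean distance with a majority vote are assumptions, not something taken from the cited sources. The point to notice is that no model is fitted; the stored examples are consulted directly each time a prediction is needed.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """Predict a label for `query` directly from the stored examples.

    `train` is a list of (features, label) pairs. Nothing is fitted in
    advance: the training data itself is searched at prediction time.
    """
    # Rank the stored examples by Euclidean distance to the query point.
    nearest = sorted(train, key=lambda pair: math.dist(pair[0], query))[:k]
    # Take a majority vote over the labels of the k closest examples.
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical toy data: two well-separated clusters in 2-D.
train = [
    ((1.0, 1.0), "a"), ((1.2, 0.8), "a"), ((0.9, 1.1), "a"),
    ((5.0, 5.0), "b"), ((5.2, 4.9), "b"), ((4.8, 5.1), "b"),
]

print(knn_predict(train, (1.1, 1.0)))
print(knn_predict(train, (5.1, 5.0)))
```

Each call goes from particular stored examples to a particular prediction, which is the "particular to the particular" style of inference described above.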

A transduction grammar describes a structurally correlated pair of languages. It generates sentence pairs, rather than sentences. The language-1 sentence is (intended to be) a translation of the language-2 sentence.

A finite state transducer consists of a number of states. When transitioning between states an input symbol is consumed and an output symbol is emitted.
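The description above can be sketched in a few lines of Python. This is a toy, deterministic transducer under stated assumptions: the helper `run_fst`, the transition-table layout, and the parity example are all hypothetical and chosen only to show that one output symbol is emitted for every input symbol consumed.

```python
def run_fst(transitions, start, symbols):
    """Run a deterministic finite-state transducer over `symbols`.

    `transitions` maps (state, input_symbol) to (next_state, output_symbol).
    Exactly one output symbol is emitted per input symbol consumed.
    """
    state = start
    output = []
    for symbol in symbols:
        # Consume one input symbol, move to the next state, emit one output.
        state, emitted = transitions[(state, symbol)]
        output.append(emitted)
    return output


# Hypothetical example: emit "E" or "O" for the running parity of the
# number of 1s read so far.
PARITY_FST = {
    ("even", "0"): ("even", "E"),
    ("even", "1"): ("odd", "O"),
    ("odd", "0"): ("odd", "O"),
    ("odd", "1"): ("even", "E"),
}

print(run_fst(PARITY_FST, "even", "1101"))
```

The output sequence always has the same length as the input sequence, which is the strict one-output-per-input property.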

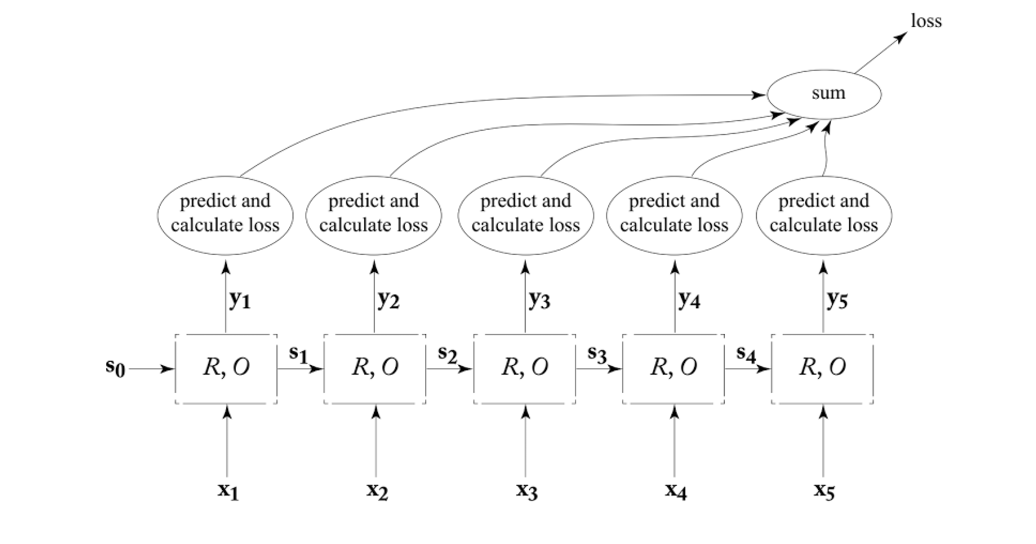

Another option is to treat the RNN as a transducer, producing an output for each input it reads in.

Transducer RNN Training Graph.


Taken from “Neural Network Methods in Natural Language Processing.”
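As a rough illustration of the transducer usage of an RNN, the sketch below runs a scalar Elman-style recurrence in plain Python and reads out one output at every input step. The function name `rnn_transduce` and the weights are hypothetical and untrained; a real model would use learned weight matrices and a task-specific output layer, so treat this only as a picture of the control flow.

```python
import math


def rnn_transduce(inputs, w_in, w_rec, w_out):
    """Emit one output per input time step from a scalar Elman-style RNN.

    The weights are plain floats chosen for illustration, not learned values.
    """
    hidden = 0.0
    outputs = []
    for x in inputs:
        # Update the hidden state from the current input and previous state.
        hidden = math.tanh(w_in * x + w_rec * hidden)
        # Read out an output at every step, rather than only at the end.
        outputs.append(w_out * hidden)
    return outputs


sequence = [0.5, -1.0, 0.25, 2.0]
outputs = rnn_transduce(sequence, w_in=0.8, w_rec=0.5, w_out=1.5)
print(len(sequence), len(outputs))
```

Reading out only the final hidden state would instead give one output for the whole sequence; producing an output at every step is what makes this the transducer form.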

Many natural language processing (NLP) tasks can be viewed as transduction problems, that is learning to convert one string into another. Machine translation is a prototypical example of transduction and recent results indicate that Deep RNNs have the ability to encode long source strings and produce coherent translations

String transduction is central to many applications in NLP, from name transliteration and spelling correction, to inflectional morphology and machine translation

Many machine learning tasks can be expressed as the transformation—or transduction—of input sequences into output sequences: speech recognition, machine translation, protein secondary structure prediction and text-to-speech to name but a few.
