In 2016, we find ourselves awash in news about machine learning. Driving this wave of interest, a number of breakthroughs, many due to deep learning, have pushed the state of the art in computer vision, speech recognition and natural language processing. Per Google Trends, searches for machine learning are up four-fold while searches for deep learning are up ten-fold over the last five years. These advances have cracked open viable paths towards primitive but economically impactful systems. Responding to this demand, our news outlets (blogs, technical magazines and newspapers alike) have struggled to keep up. Naturally, the resulting coverage and consequent discourse have both been consistently ridiculous. However, contrary to popular narrative, blame does not lie with James Cameron or with his 1984 messianic post-apocalyptic sci-fi thriller The Terminator.

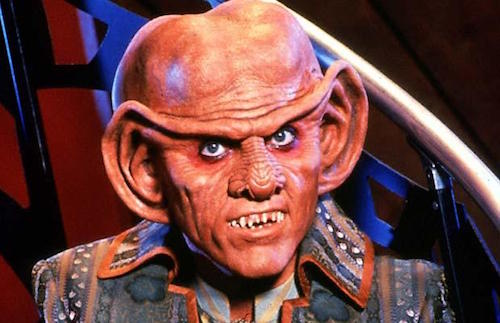

Of course, a cursory examination of the role of The Terminator in contemporary discourse on AI hints otherwise. Searches for "deep learning Terminator", "Geoff Hinton Terminator", "DeepMind Terminator", and "OpenAI Terminator" all turn up ample results. News outlets including the BBC and the New York Times frequently evoke the film, either when pondering the dangers of AI or to dispel them. And machine learning luminaries, including Yann LeCun and Thomas Dietterich, are frequently put upon to comment on the plausibility of Terminator-like scenarios or other campy depictions of AI in film, as showcased by this CNET article. The same article contains an awkward and similarly unwarranted repudiation of Ex Machina, itself a fun and intelligent film that deep down has no more connection to AI than Star Trek does to exobiology.

So how can it be, despite the seemingly inextricable connection between this 1980s masterpiece and conversations about machine learning, that the blame foisted upon James Cameron and the Austrian Oak might be misplaced? To begin with, let's set some proper context. Despite world-wide popularity, neither the Pixar hit Cars nor its talking-car predecessor Knight Rider led millions to mistakenly believe in loquacious automobiles. Similarly, while Jurassic Park took liberties with science, few would accuse the thriller of ruining news reporting on biology, or of prejudicing generations against biological research. Along similar lines, Star Trek has neither inspired absurd journalism nor threatened academic consideration of exobiology, however implausible its universe populated by intelligent humanoids.

The Methods and Impact of AI/ML Are Hard To Discuss

While The Terminator makes for a convenient scapegoat, the real culprit behind the cartoonish treatment of machine learning is the difficulty of discussing it. First, engaging deeply on the topic of machine learning requires some mixture of computational thinking and a basic understanding of linear algebra, probability, and statistics. While one needn't be a world-class mathematician to grasp the elementary ideas of machine learning, the combination of expertise required to speak reasonably about machine learning is rare, even among software developers. Of course, it's even rarer among mainstream journalists and rarer yet among the audience they address.

Second, reasoning about how machine learning will impact society may be impossible without understanding it deeply. This may seem like a tautology, but allow me to unpack the idea. For other world-changing technologies, this does not always hold. Take the automobile, for instance. Even now, the majority of people who drive, myself included, lack a sophisticated understanding of the engineering principles at work in an automobile engine. However, it seems unlikely that it was ever hard to reason about how a car might affect life. Of course, the scope of its economic impact might have been hard to gauge, but the fundamental mechanism is obvious. One only needs to know that cars can transport objects safely at speeds of roughly 100 km/h. Absent deeper technical knowledge, one could then reason about how their widespread adoption would affect the shipping industry and other sectors. Similarly, aircraft design requires elaborate engineering and a deep understanding of the physical and mathematical principles at play. But one needn't understand aircraft deeply to figure out that they are load-bearing tin cans that fly between cities.

Machine learning, on the other hand, represents a comparatively protean entity. The same technology can potentially translate between languages and enhance the resolution of video frames. Some developments leading to improved computer vision results are likely to cascade into seemingly unrelated fields like speech recognition, while others aren't. To form an intuition about which tasks machine learning might conquer next, one might actually need to understand what principles are at play.

Silver Lining

While horrible AI coverage remains ubiquitous, sober coverage has increasingly found its way to the fore. Cade Metz of Wired has come to reliably deliver reasonable coverage on the topic, engaging with real machine learning experts and providing level-headed coverage of the recent triumph of Google's AlphaGo over Lee Sedol. Similarly, from the business angle, Jack Clark of Bloomberg has contributed clear-headed writing about the budding industry surrounding machine learning. I've had the opportunity both to interview for and to contribute a featured article to IEEE Spectrum. Hopefully, as more experts in the field take an interest in communicating with the wider population, and more journalists take time to specialize in the area, the coverage will continue to improve.

A Pardon for T-800

To wrap up, the poor state of public discourse on machine learning doesn't owe to The Terminator. We'd be no better off if the public instead attached conversations about machine learning to some other, less menacing film. The prominence of The Terminator in the discourse represents an effect, not a cause, of widespread ignorance. The public's confusion owes both to the difficulty of understanding machine learning and to the difficulty of reasoning about its impact absent such understanding. The situation is exacerbated by the paucity of journalists able to reason about machine learning, and the dearth of machine learning researchers inclined towards journalism.

Zachary Chase Lipton is a PhD student in the Computer Science and Engineering department at the University of California, San Diego. Funded by the Division of Biomedical Informatics, he is interested in both theoretical foundations and applications of machine learning. In addition to his work at UCSD, he has interned at Microsoft Research Labs and as a Machine Learning Scientist at Amazon, and is a Contributing Editor at KDnuggets.
